How energy fuels Nvidia's race to outpace physics

Plus: AI cracks nuclear resistance

Going to CERAWeek in Houston? Let me know! amy.harder@axios.com. Scroll to the end for a nature / humanity photo contrast.

I did a takeover edition of our Axios Future of Energy newsletter going deep into Nvidia. Check it out below.

Bonus: AI power demand cracks resistance to nuclear power

The AI boom is pushing one of America’s most venerable environmental groups to cautiously support nuclear power after decades of resistance.

Why it matters: The Natural Resources Defense Council’s position is both a sign of the urgent power demands that AI is creating and a larger shift underway among environmentalists to embrace an energy source many once rallied against.

Nvidia’s race to outpace physics

Nvidia’s chips are improving at such a staggering pace that it defies any historical comparison.

Why it matters: Without these gains — which are drawing increased attention as AI transforms society — physics would slam the brakes on the data center boom.

Driving the news: Nvidia CEO Jensen Huang said yesterday he expects the company to reap “at least” $1 trillion in revenue for its newest chips through 2027.

It posted record sales and earnings last month, fueled by skyrocketing orders from Big Tech data center companies.

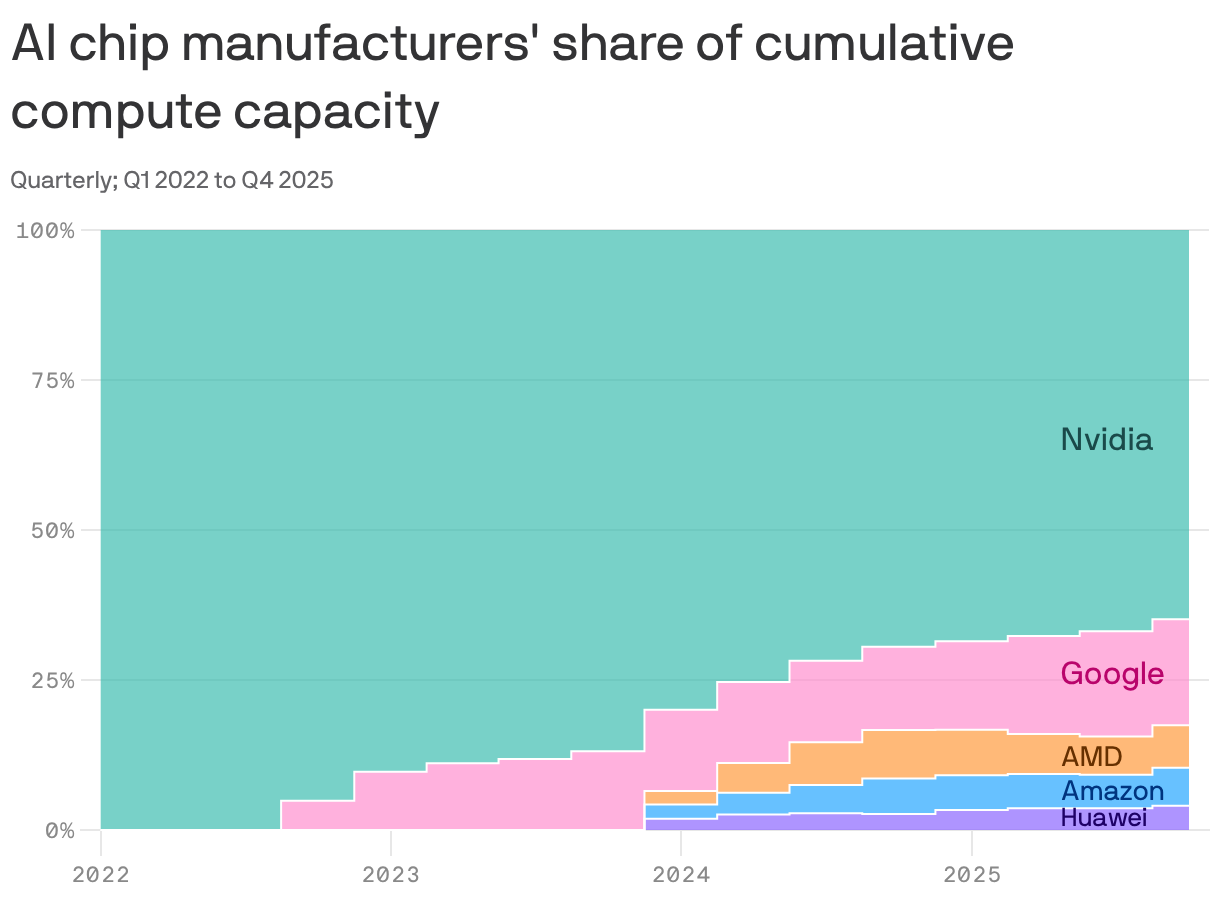

By the numbers: Nvidia has historically dominated the market, but its cumulative share has dropped from 100% in the first quarter of 2022 to 65% in the fourth quarter of last year, according to the research and consultancy firm SemiAnalysis (see chart below).

The big picture: The AI boom runs on electricity — and Nvidia’s chips determine how far that power goes.

Chips — flat, stamp-sized squares — are the beating heart of data centers.

Each new generation delivers dramatic gains in performance per chip — even as total AI energy demand keeps surging.

“Chips are being redesigned because efficiency determines how fast intelligence can scale,” Huang wrote in a rare blog post published last week.

“Energy becomes central because it sets the ceiling on how much intelligence can be produced at all.”

Zoom out: Energy efficiency has long been a boring but important component of technology.

We might think of energy-efficient appliances or a Toyota Prius — saving money and helping the planet.

But that’s not what’s happening with the AI boom. Energy efficiency is no longer a nice-to-have; it’s a must-have.

Electricity is physically limited. AI demand appears unlimited.

That makes energy efficiency the backbone of society’s astonishing growth in AI computing power.

The intrigue: It’s like going from a Model T to a Tesla in under a decade — instead of more than a century.

If cars’ fuel efficiency had improved as swiftly as chips, “we’d be driving to the moon and back in one gallon of gas,” said Josh Parker, head of sustainability at Nvidia, the world’s leader in AI computation.

Flashback: In 1865, British economist William Stanley Jevons observed that as England’s coal-fired steam engines became more efficient, the country used more coal, not less.

This has become known as the Jevons paradox: making something more energy-efficient, which should save energy, often ends up increasing total energy demand.

AI is putting the Jevons paradox on steroids.

“The absolute footprint of AI, in terms of energy consumption, we do see it growing year over year, and we expect that trend to continue,” Parker said.
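The paradox comes down to simple division: energy used equals the computing demanded divided by efficiency. The sketch below uses purely hypothetical numbers (a 10x efficiency gain and a 20x jump in demand, not figures from Nvidia or this story) to show how total energy can still rise.

```python
def total_energy(work_demanded: float, work_per_unit_energy: float) -> float:
    """Energy needed = work demanded / efficiency (work per unit of energy)."""
    return work_demanded / work_per_unit_energy

# Hypothetical baseline: 100 units of computing at 1 unit of work per unit of energy.
baseline = total_energy(work_demanded=100, work_per_unit_energy=1)

# Hypothetical future: chips become 10x more efficient, but demand grows 20x.
future = total_energy(work_demanded=100 * 20, work_per_unit_energy=1 * 10)

print(f"Baseline energy: {baseline:.0f}")
print(f"Future energy:   {future:.0f}")
print(f"Change: {future / baseline:.1f}x")
```

Total energy only falls if demand grows more slowly than efficiency improves; when demand outpaces efficiency, as in this made-up example, the footprint keeps growing.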

How it works: Two essential components of a chip’s efficiency are its energy consumption and how it’s cooled.

Physics dictates that the electricity powering a chip ultimately becomes heat, which then has to be removed.

Catch up quick: Cooling technologies broadly fall into two categories.

Traditional air-cooled data centers often rely on evaporative cooling, which can consume significant amounts of water.

Newer liquid-cooled systems can reduce that water use — though total demand still depends on design and location.

“If you have chips and servers, they’re useless if you don’t have power and you don’t have cooling,” said Rich Whitmore, who leads Motivair, Schneider Electric’s liquid-cooling business, which works with Nvidia.

Zoom in: Each generation of Nvidia’s chips — named after famous scientists — posts massive efficiency gains over the last.

With Blackwell, the chip hitting the market today, Nvidia redesigned the whole architecture of computing to get more performance and efficiency, said Dion Harris, senior director of AI infrastructure at Nvidia.

Scroll down to go deeper on the different chips.

What’s next: If we went from a Model T to a Tesla in the past decade, imagining what the next few years could bring feels almost absurd.

“Maybe some kind of hovercraft,” Parker said, joking (maybe).

Illustrated: Nvidia's chip dominance

Nvidia has dominated cumulative chip sales since 2022, but competitors are encroaching, per data from research and consultancy firm SemiAnalysis.

Why it matters: If you lead on chips, you lead on everything.

What they’re saying: The continuous evolution of its chips allows Nvidia to keep an edge over competitors, analysts say.

The pace at which Nvidia releases chips “continually resets the competitive benchmark,” SemiAnalysis’ Ivan Chiam said in an email to Axios.

“That speed of iteration makes it extremely difficult for competitors to close the gap, as they would need to execute flawlessly across multiple generations just to catch up.”

Yes, but: Nvidia faces a threat as the industry shifts from training AI models to running them, known as inference. Its chips are optimized for training.

“All this inference stuff is incredibly threatening to Jensen, because it’s all efficiency-driven,” Paul Kedrosky, a venture investor and fellow at MIT’s Initiative on the Digital Economy, told the WSJ. “He’s desperately trying to find a way to extend the franchise into inference.”

"50x" efficiency fuels AI boom: Inside Nvidia's chips

Nvidia is seeking to keep an edge over its competitors by rapidly innovating on its moneymakers: its chips.

Why it matters: Computer chips may seem arcane, but understanding them unlocks what sits at the center of the AI boom.

State of play: Each new generation of Nvidia chips delivers dramatic gains in performance per watt — even as total AI energy demand keeps surging.

The intrigue: You’ve probably seen how cats fit into small boxes. Well, that’s kind of what’s going on with Nvidia’s quest to get ever-more computing out of its chips.

“We think of data centers as a box,” said Harris.

The question is always: “How can we squeeze more performance out of the same power envelope?”

How it works: Nvidia names its chips after famous scientists. Let’s meet them.

Hopper — named after computer scientist Grace Hopper — is in many ways the original AI chip.

Nvidia introduced it in 2022, and it still accounts for roughly half of all installed computing power today, according to estimates from SemiAnalysis.

Hopper systems were introduced into data centers that were still primarily air-cooled, though they can also be liquid-cooled.

Blackwell — named after mathematician David Blackwell — generates up to 50 times more performance per watt compared to Hopper.

With this generation, introduced in 2024, Nvidia redesigned the whole ecosystem to maximize the chip’s efficiency.

This includes a move to liquid cooling, which Parker says lets it use 300 times less water than traditional air-cooled systems.

This chip accounts for about half of all computing power today, a rapid rise from almost nothing in 2024, per SemiAnalysis.

Rubin — named after astronomer Vera Rubin — is expected to generate up to 10 times more performance per watt than Blackwell.

With Rubin, Nvidia’s chips will complete a full shift to liquid cooling.

This generation is expected to account for just a small fraction of computing power this year, but by next year, it’ll account for more than half, SemiAnalysis projects.
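Because each “up to” figure is measured against the previous generation, the gains compound. A minimal sketch, assuming the 50x and 10x best-case figures can be multiplied straight through (they may come from different workloads, so treat the result as a rough ceiling), shows what that implies inside the same power envelope Harris describes. The 1-megawatt envelope is a hypothetical round number chosen only for illustration.

```python
# Best-case performance-per-watt multipliers quoted above,
# each relative to the previous generation ("up to" figures).
GEN_MULTIPLIERS = [
    ("Hopper", 1.0),      # baseline, normalized to 1
    ("Blackwell", 50.0),  # up to 50x Hopper
    ("Rubin", 10.0),      # up to 10x Blackwell (expected)
]

# Hypothetical fixed power envelope for one data center "box", in watts.
POWER_ENVELOPE_W = 1_000_000

def relative_perf_per_watt(multipliers):
    """Compound generation-over-generation gains into gains vs. the first generation."""
    result = {}
    running = 1.0
    for name, step in multipliers:
        running *= step
        result[name] = running
    return result

perf_per_watt = relative_perf_per_watt(GEN_MULTIPLIERS)
for name, ppw in perf_per_watt.items():
    # Performance in the same box = power envelope x performance per watt.
    # Units are arbitrary: Hopper's per-watt performance is defined as 1.
    print(f"{name:<10} {ppw:>6.0f}x per watt -> {POWER_ENVELOPE_W * ppw:,.0f} relative units")
```

Under these assumptions, Rubin's ceiling works out to roughly 500 times Hopper's performance from the same power, which is why the power envelope, not the chip count, is the number that matters.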

The bottom line: “Understanding the pace we’re advancing these platforms is unlike anything we’ve ever seen in any other sector,” Harris said.

Meet the sustainability exec at the center of the AI boom

Josh Parker didn’t intend to work at Nvidia or even in sustainability — and yet finds himself at this precise intersection at a pivotal moment.

Why he matters: As head of Nvidia’s sustainability efforts, Parker oversees the behemoth’s work to contain its environmental footprint despite explosive growth.

Catch up quick: Parker joined Nvidia in August 2023, less than a year after the first ChatGPT model was released to the public and turned the world as we know it upside down.

“Working at Nvidia during the AI revolution, talking about AI applications for human good — it would have always been a dream job if I knew it was going to exist,” Parker told Axios.

Flashback: Parker was a lawyer practicing intellectual property. Sustainability came up kind of out of the blue for him in the first half of the last decade.

He was in Thailand working on behalf of Western Digital, a computer storage firm, when he got a call asking if he wanted to stand up a formal sustainability function at the company.

Parker volunteered — even though, as he puts it, he “didn’t know what I was doing.”

The intrigue: He was trying to recruit someone from Nvidia to Western Digital when a reverse poaching occurred: the chipmaker recruited him away instead.

Context: The 47-year-old, originally from Oregon and now living in Denver, has a far lower profile than the sustainability leads at other tech firms.

As one limited barometer: He has 2,500 followers on LinkedIn, while the chief sustainability officers of many of Nvidia’s top customers — Microsoft’s Melanie Nakagawa, Amazon’s Kara Hurst and Google’s Kate Brandt — each have tens of thousands, if not a couple hundred thousand, followers.

These three are also regularly on the road doing public speaking.

Parker is ramping up how often he gives talks. “I would have been shocked,” he said, “to be doing so much public speaking.”

He says he enjoys it, but — as an introvert — he also needs time to recharge afterward.

Yes, but: Parker acknowledges that people have concerns both about the environmental footprint of the AI boom and about AI’s potential to displace jobs and our humanity.

“This is a huge change in the world, and there are some near-term inevitable bumps in the road,” Parker said.

But he added: “I see more rays of sunshine than gray clouds in the future.”

The bottom line: “People are recognizing that this is the new normal,” Parker said. “AI is part of our lives now.”